AI is Redesigning Storage Architectures

Fred Moore’s Library of Congress Presentation Reveals Critical Infrastructure Challenges Ahead

By Fred Moore

Principal at Horison Information Strategies and Technical Advisor to the LTO Show

The storage industry stands at a critical fork in the road. As artificial intelligence workloads explode and data creation accelerates toward 175 zettabytes by year-end 2025, the underlying infrastructure that has supported digital growth for decades is showing dangerous signs of strain.

This was the stark message delivered by Fred Moore, President of Horison Information Strategies, at the Library of Congress’s “Designing Storage Architectures for Digital Collections” conference in Washington, DC.

The Vertical Market Failure: When Infrastructure Can’t Keep Pace

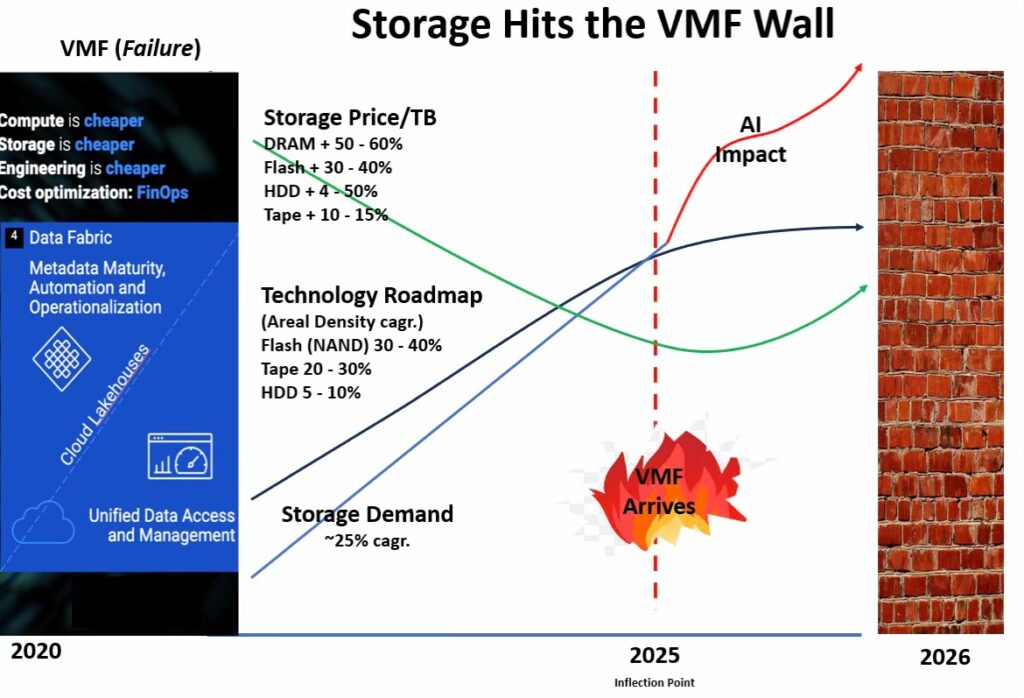

Moore introduced a concept that should concern every data center operator, CIO, and storage professional: the Vertical Market Failure (VMF). This occurs when the underlying infrastructure fails to meet the demands of the market it serves—and according to Moore’s analysis, we’re living through one right now.

“Current HDD and tape development roadmap progress has fallen behind projected storage specifications and increasing demand,” Moore explained. “The VMF has created mounting storage supply shortages and higher prices across the board.”

The numbers paint a sobering picture. Storage prices have surged across all tiers:

DRAM: +50–60%

Flash: +30–40%

HDD: +4–50%

Tape: +10–15%

Meanwhile, Moore points to another critical observation: technology roadmap progress, measured by areal density compound annual growth rate (CAGR), tells an equally concerning story.

Flash areal density (NAND) is advancing at 30–40% CAGR, tape at 20–30%, but HDDs—the workhorse of nearline storage—are crawling forward at just 5–10% CAGR.

Against storage demand growing at approximately 25% CAGR, the math simply doesn’t work.
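A rough sketch of that arithmetic, using the growth rates quoted above. The five-year horizon and the names `compound`, `DEMAND_CAGR`, and `HDD_DENSITY_CAGR` are assumptions chosen for illustration, not figures from Moore's presentation.

```python
def compound(rate: float, years: int) -> float:
    """Growth multiple after `years` at a constant annual `rate` (0.25 = 25%)."""
    return (1.0 + rate) ** years


DEMAND_CAGR = 0.25                # from the article: roughly 25% demand growth
HDD_DENSITY_CAGR = (0.05, 0.10)   # from the article: 5-10% HDD areal density growth
YEARS = 5                         # assumption: illustrative planning horizon

demand = compound(DEMAND_CAGR, YEARS)
for rate in HDD_DENSITY_CAGR:
    density = compound(rate, YEARS)
    # A gap above 1.0 means capacity per drive grows more slowly than demand,
    # so the shortfall has to be covered by shipping more units.
    gap = demand / density
    print(f"HDD density at {rate:.0%}/yr: demand x{demand:.2f}, "
          f"density x{density:.2f}, unit gap x{gap:.2f}")
```

Under these assumptions, demand roughly triples in five years while HDD areal density grows by only about 1.3x to 1.6x, so the difference must come from more drives, more floor space, or other media.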

AI: The Game-Changer Reshaping Everything

The catalyst for this crisis? Artificial Intelligence.

But Moore emphasized that AI isn’t just another application consuming storage—it’s fundamentally redesigning how we think about data access patterns, infrastructure requirements, and even electrical grids.

Consider the Stargate data center, a $500 billion joint venture between OpenAI, Oracle, and Softbank being built on 1,200 acres in Abilene, Texas.

This single facility could consume more than 900 megawatt-hours of electricity and house over 450,000 GPUs.

To put that in perspective, AI workloads already use more energy than Ireland, Denmark, Finland, New Zealand, and Chile combined.

US data centers used about 4.4% of the nation’s electricity in 2023 and are projected to use as much as 12% by 2028.

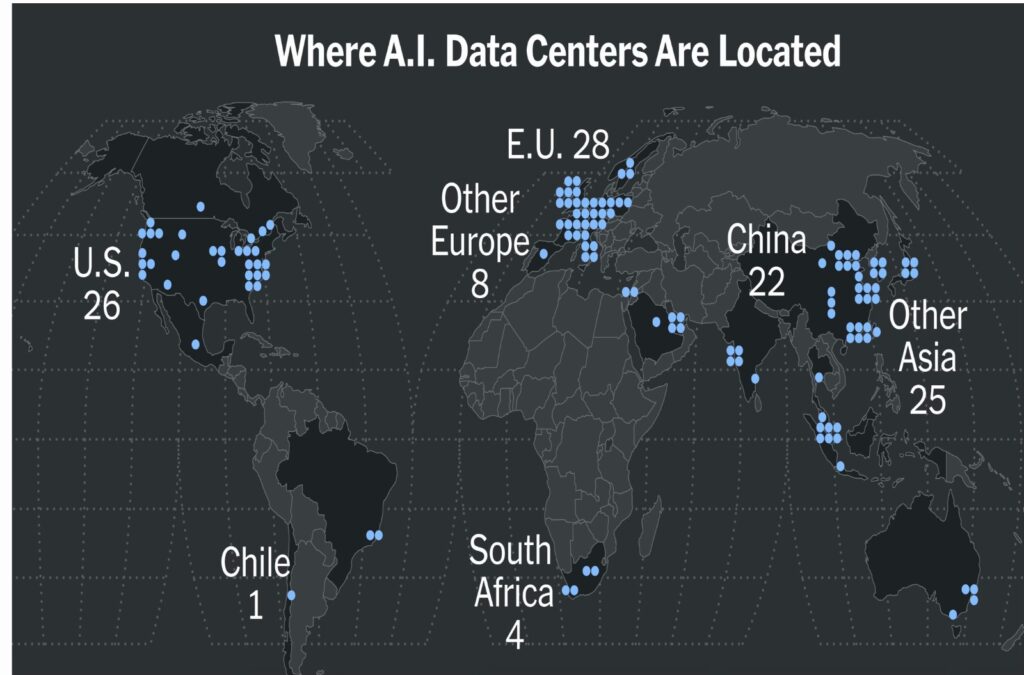

We’re witnessing the emergence of a worldwide digital divide—not between those with internet access, but between nations with compute power and those without it.

The Dynamic Archive: A New Paradigm

Perhaps Moore’s most important insight was the introduction of the “Dynamic Archive” concept—a fundamental shift from traditional data lifecycle assumptions.

Historically, data followed a predictable pattern:

• Hot when created

• Cooling to warm within weeks

• Settling into cold archival status within 90–120 days

The probability of access declined steadily as data aged. Storage architectures were designed around this predictability.

AI has shattered that model.

“Cold archival data can become hot and stay hot for variable time periods as the value of data changes,” Moore noted.

AI training and inference workloads don’t respect traditional data temperature classifications. A dataset dormant for years might suddenly become critical for training a new model, requiring sustained high-performance access for weeks or months before returning to cold storage.

This creates what Moore calls the Dynamic Archive, where data doesn’t permanently settle into temperature tiers but moves fluidly between hot, warm, and cold states based on AI-driven analytics, compliance needs, or unexpected access patterns.
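One way to picture the Dynamic Archive is as a tier assignment that is re-evaluated from current access activity rather than from data age. The sketch below is a minimal illustration only: the `Dataset` class, the 30-day window, and the `HOT_READS` and `WARM_READS` thresholds are hypothetical and do not come from Moore's presentation.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical thresholds: reads per 30-day window that mark each temperature.
HOT_READS = 100
WARM_READS = 10


@dataclass
class Dataset:
    name: str
    tier: str = "cold"
    history: List[str] = field(default_factory=list)

    def observe(self, reads_last_30_days: int) -> str:
        """Re-evaluate the tier from recent access activity, ignoring age."""
        if reads_last_30_days >= HOT_READS:
            self.tier = "hot"
        elif reads_last_30_days >= WARM_READS:
            self.tier = "warm"
        else:
            self.tier = "cold"
        self.history.append(self.tier)
        return self.tier


# A dataset that sat idle, was pulled into a training run, then went quiet again.
archive = Dataset("sensor-logs-2015")
for reads in [0, 0, 5000, 4200, 3, 0]:
    archive.observe(reads)
print(archive.history)
```

In the traditional model the tier would only ever move from hot toward cold; here the same dataset moves from cold to hot and back as its access pattern changes.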

Memory and Storage: The New Bottleneck

The presentation highlighted a critical distinction in how AI consumes resources compared to traditional workloads.

A Google search uses approximately 0.5 MB of DRAM and completes in milliseconds.

An AI chat prompt can occupy 1 TB of DRAM for seconds or minutes.

“AI occupies memory for significantly longer time,” Moore emphasized.

“Memory and storage demands outstrip supply—prices rise.”
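The gap is easier to see when memory footprint and holding time are multiplied together. The sketch below uses the 0.5 MB and 1 TB figures from the text. The durations (5 ms for a search, 10 s for a prompt) are assumptions picked from within the "milliseconds" and "seconds or minutes" ranges, and `byte_seconds` is a hypothetical helper.

```python
MB = 10**6
TB = 10**12


def byte_seconds(bytes_held: float, seconds: float) -> float:
    """Memory occupancy: how many bytes are held, multiplied by how long."""
    return bytes_held * seconds


# Footprints are from the article; the durations are illustrative assumptions.
search = byte_seconds(0.5 * MB, 0.005)   # 0.5 MB held for an assumed 5 ms
prompt = byte_seconds(1 * TB, 10.0)      # 1 TB held for an assumed 10 s

ratio = prompt / search
print(f"search: {search:.3g} byte-seconds")
print(f"prompt: {prompt:.3g} byte-seconds")
print(f"prompt/search occupancy ratio: {ratio:.1e}")
```

With these assumed durations, a single AI prompt ties up on the order of billions of times more memory-time than a search, which is why DRAM supply tightens even when request counts look comparable.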

By 2030, the AI sector could consume up to 1,000 terawatt-hours per year, with inference alone accounting for more than 80% of all AI energy consumption.

Water scarcity adds another dimension to the crisis, with global AI demand projected to reach 4–7 billion cubic meters of water in 2027.

The Supply Chain Vulnerability

Moore’s analysis revealed a troubling concentration of suppliers across the storage ecosystem.

Tape Drives

IBM is the only tape drive developer and supplier, and it controls the specifications for the entire tape ecosystem.

Tape Media

Fujifilm and Sony are the only LTO suppliers.

HDDs

Seagate, Toshiba, and Western Digital are the only remaining suppliers.

Tape Libraries

HPE, IBM, Quantum, and Spectra are the primary large-scale library suppliers.

This consolidation creates vulnerability. Any disruption in this tightly coupled supply chain reverberates across the entire industry.

Redesigning Storage Architectures: The Path Forward

Moore outlined critical requirements for next-generation secondary storage architectures serving the estimated 6.4 zettabytes of secondary storage capacity installed by 2025.

Price Optimization

• Lowest $/TB

• Minimized Total Cost of Ownership (TCO)

• Optimized floorspace

• Easy installation

• Self-maintenance capabilities

Performance Requirements

• Fast access times

• High data rates

• Support for both random and sequential access

• Intelligent robotics

• Ability to handle both hot and cold workloads without data movement between tiers

Capacity and Scalability

• Seamless scaling

• Rack-scale design

• Sustainable roadmaps

• Legacy emulation

Reliability

• Bit error rates of 1 in 10^20 bits or better

• Long-life media

• Resilience to temperature, humidity, radiation, EMP, and saltwater
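To give the bit error rate target some scale, the sketch below estimates the expected number of bit errors when reading the 6.4 zettabytes of secondary storage cited earlier. It reads the target as one error per 10^20 bits read, which is an interpretation rather than something the presentation spells out. The 10^15 comparison value and the `expected_bit_errors` helper are hypothetical.

```python
ZB = 10**21  # bytes in a zettabyte (decimal)


def expected_bit_errors(bytes_read: int, bits_per_error: int) -> float:
    """Expected errors when one error occurs per `bits_per_error` bits read."""
    return (bytes_read * 8) / bits_per_error


# 6.4 ZB, written as an integer to avoid floating-point rounding in the input.
installed_bytes = 64 * ZB // 10

target = expected_bit_errors(installed_bytes, 10**20)   # target from the list above
weaker = expected_bit_errors(installed_bytes, 10**15)   # hypothetical weaker media

print(f"errors at 1 in 10^20: {target:,.0f}")
print(f"errors at 1 in 10^15: {weaker:,.0f}")
```

Under this reading, even a full pass over 6.4 ZB at the target rate yields only a few hundred expected bit errors, while a rate five orders of magnitude weaker yields tens of millions.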

Security

• Air-gap capabilities

• Encryption

• WORM functionality

• Removable media

• Physical vault options

Sustainability

• Low CO₂ emissions

• Minimal power and water consumption

• Reduced electronic waste

• Reliable energy sources

The Question of New Technologies

Moore posed the industry’s most pressing question:

“Will a new novel technology or solution ever arrive?”

The presentation showcased emerging possibilities including:

• DNA molecular storage

• Photonics

• Glass nano-layers

• Ceramics

• Nanostructure solutions

• 3D-refraction

• Quantum storage

• Celestial archives in space

But the timeline for commercial viability remains uncertain.

The Three-Tier Secondary Storage Model

For the immediate future, Moore advocates for a three-tier secondary storage architecture.

Active Archive (WORM – Write Once, Read Many)

Nearline HDD or SSD for frequently accessed archival data.

Archive (WORSe – Write Once, Read Seldom)

Robotic tape libraries, nearline HDD, and emerging technologies.

Deep Archive (WORN – Write Once, Read Never)

Advanced tape, ceramics, photonics, DNA, 3D, glass, holographic, and future technologies.

The critical question remains:

“Can one technology do it all?”

Moore’s answer appears to be no—the future requires a heterogeneous approach optimized for different access patterns and retention requirements.
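The three tiers can be sketched as a simple placement rule keyed on expected access frequency. The tier names and candidate media follow the list above. The `choose_tier` function and its read-frequency cut-offs are hypothetical, since the presentation does not give numeric boundaries.

```python
# Tier names and candidate media as listed in the article.
TIERS = {
    "active archive": ["nearline HDD", "SSD"],
    "archive": ["robotic tape library", "nearline HDD"],
    "deep archive": ["advanced tape", "ceramics", "photonics", "DNA", "glass"],
}


def choose_tier(expected_reads_per_year: float) -> str:
    """Pick a tier from expected access frequency (cut-offs are assumptions)."""
    if expected_reads_per_year >= 12:     # read many: about monthly or more
        return "active archive"
    if expected_reads_per_year >= 0.1:    # read seldom: about once a decade or more
        return "archive"
    return "deep archive"                 # read (almost) never


for reads in (50, 1, 0):
    tier = choose_tier(reads)
    print(f"{reads} reads/year -> {tier}: {', '.join(TIERS[tier])}")
```

No single entry in `TIERS` covers every access pattern, which mirrors the heterogeneous approach described above.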

A Call to Action

Moore’s presentation concluded with an urgent question:

“Can the VMF become a VMR (Vertical Market Recovery)?”

The answer depends on whether storage R&D and technology roadmaps can accelerate to meet demand.

Higher storage prices mean higher revenues, but the key question is whether companies will reinvest that revenue in technology advancement or return it to shareholders.

For the tape and broader storage industry, the message is clear:

Business as usual won’t suffice.

The AI-driven zettabyte era demands a fundamental rethinking of storage architectures, supply chains, and technology roadmaps.

As Moore pointedly noted:

“No Data = No AI!”

The future of artificial intelligence—and the digital economy it powers—depends on solving the storage crisis we face today.

About the Author

Fred Moore is a Technical Advisor to the LTO Show and principal at Horison Information Strategies in Boulder, Colorado, a data storage industry analyst and consulting firm that specializes in keynote speaking, executive briefings, marketing strategy, and business development for end-users and storage hardware and software suppliers.

Please reach out with story ideas or comments; we’ll respond to each directly.

Email: pete@ltoshow.com

Copyright 2026 The LTO Show and Pete Paisley

Linear Tape-Open LTO, the LTO logo, Ultrium, and the Ultrium logo are registered trademarks of Hewlett Packard Enterprise, IBM, and Quantum in the US and other countries. All product and company names are trademarks™ or registered® trademarks of their respective holders. Use of them does not imply any affiliation with or endorsement by them.